I'm Yan Hao, a master's student in computer science at ETH Zürich. Before that, I received my B.S. degree from the ACM Honors Class, Zhiyuan College, Shanghai Jiao Tong University (SJTU). I am interested in machine learning and computer vision, especially 3D vision. At ETH, I contributed to a 3D vision project supervised by Prof. Dr. Iro Armeni. I completed my master's thesis at EPFL under the supervision of Dr. Florent Forest and Prof. Dr. Olga Fink.

Email / GitHub / Google Scholar / CV

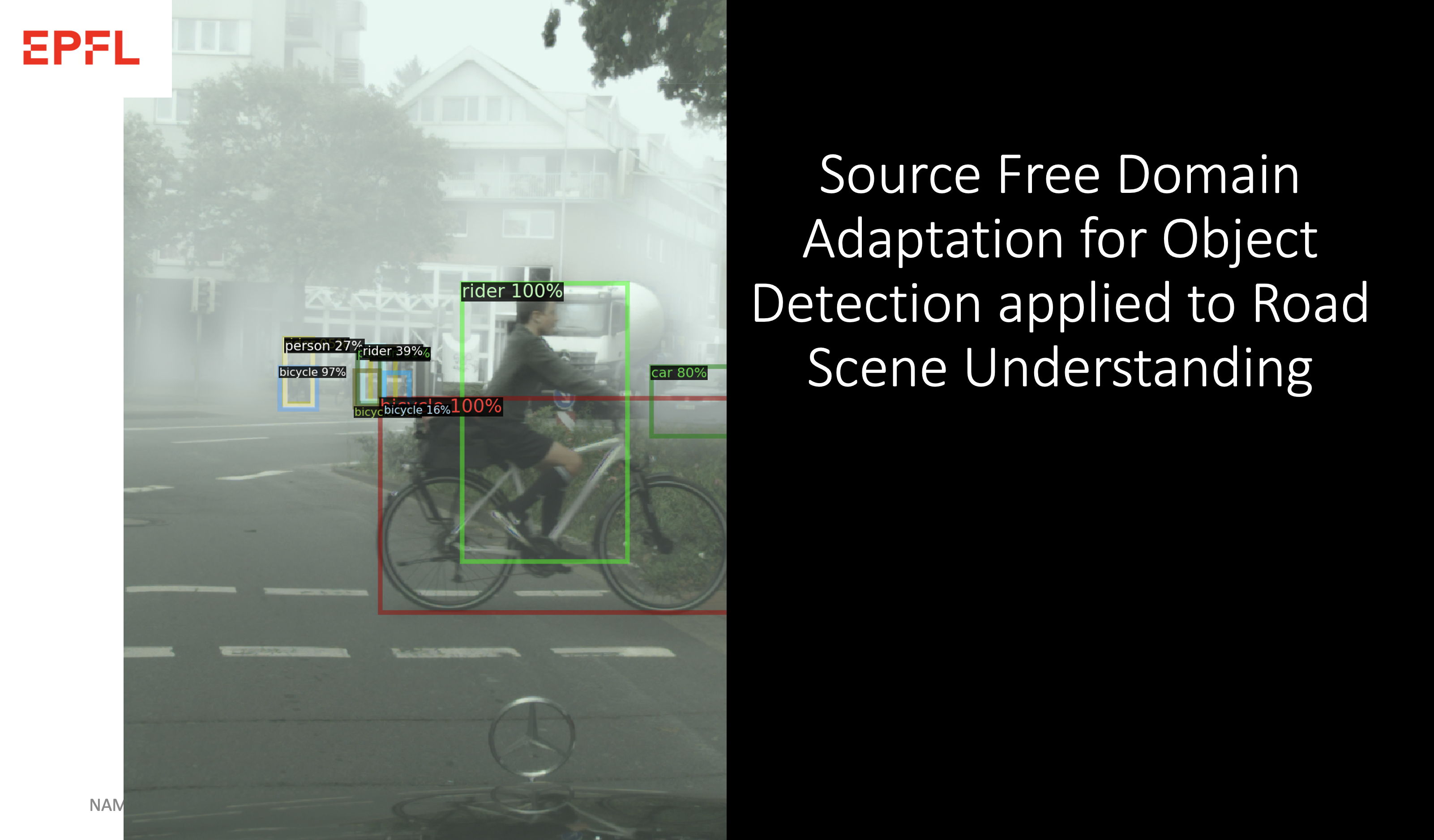

Master's Thesis, EPFL, Switzerland

Nov. 2022 - June 2023

Master in Computer Science, ETH Zürich, Switzerland

Sep. 2020 - Present

B.S. in Computer Science, Shanghai Jiao Tong University

ACM Honors Class, supervised by Prof. Yong Yu. Sep. 2016 - June 2020

Research Intern, Amazon AWS Shanghai AI Lab

Supervised by Tong He and Tianjun Xiao. June 2020 - Sep. 2020

Yan Hao*, Florent Forest*, Olga Fink

We propose a new source-free domain adaptation method for object detection, applied to road scene understanding. The implementation is based on Detectron2 and PyTorch. It achieves state-of-the-art results when adapting from Cityscapes to Foggy Cityscapes and from Sim10k to Cityscapes.

Master's thesis. In preparation for conference submission.

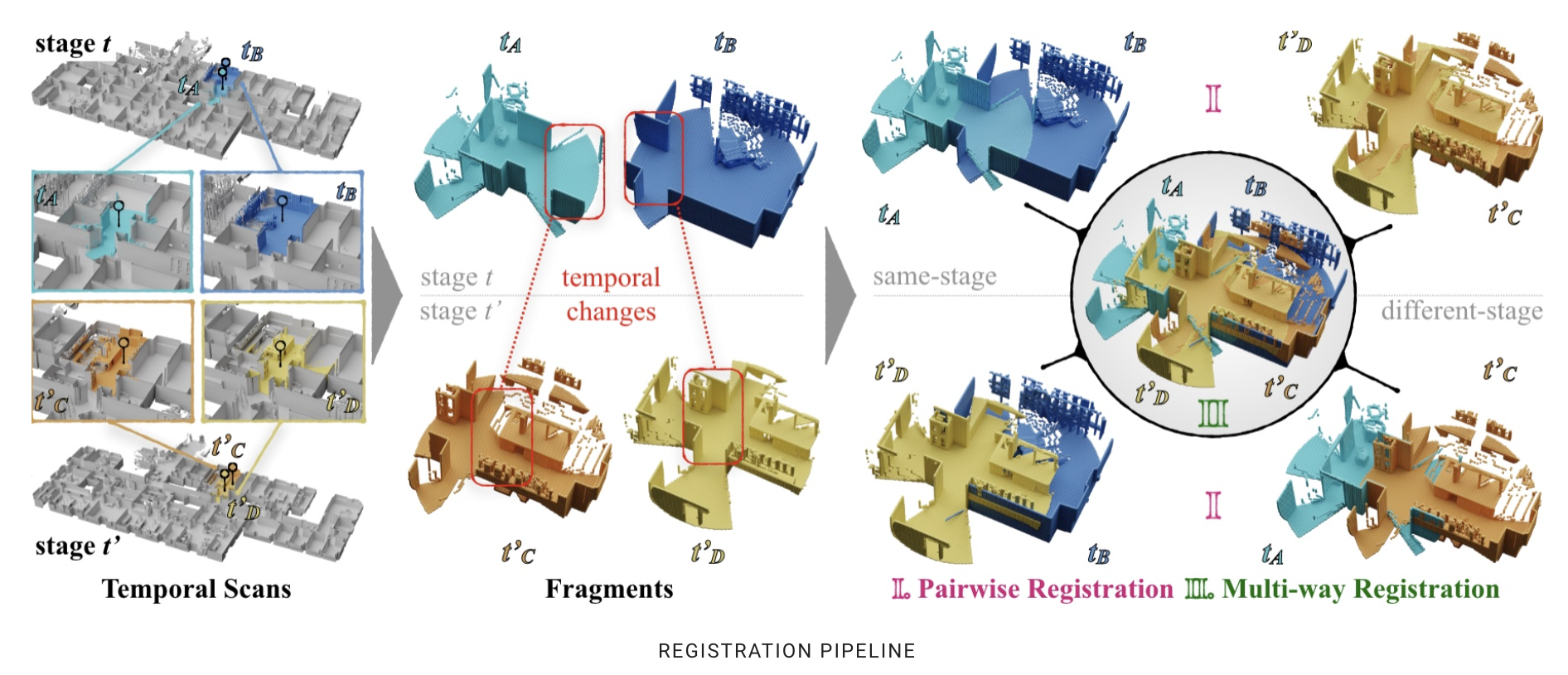

Tao Sun, Yan Hao, Shengyu Huang, Silvio Savarese, Konrad Schindler, Marc Pollefeys, Iro Armeni

We propose NSS (Nothing Stands Still), a new spatiotemporal dataset and benchmark for 3D point cloud registration under large geometric changes across temporal stages.

Accepted at CVPR 2023 DEMO. In submission.

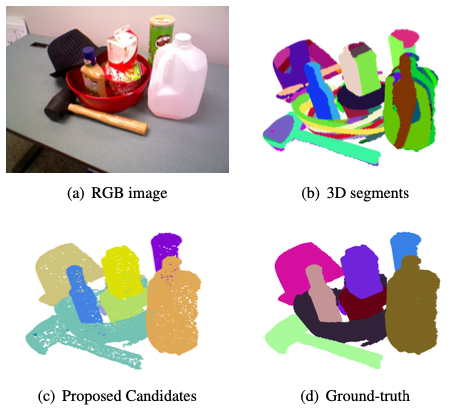

Zelin Ye, Yan Hao, Liang Xu, Rui Zhu, Cewu Lu

We propose a robust bottom-up method for 3D objectness estimation: it first over-segments the scene point clouds and then groups the segments iteratively, using a novel regret mechanism to withdraw incorrect groupings.

arXiv.

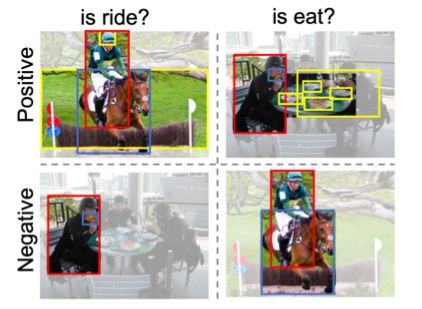

Liang Xu, Yong-Lu Li, Minyang Chen, Yan Hao, Cewu Lu

Our proposed PAL-Net has two ingredients for scene graph generation. First, we introduce a novel embedding loss for translation embedding in a metric learning manner. Then, we take predicates as conditions for contextual modeling to alleviate noise.

ICME. Oral.

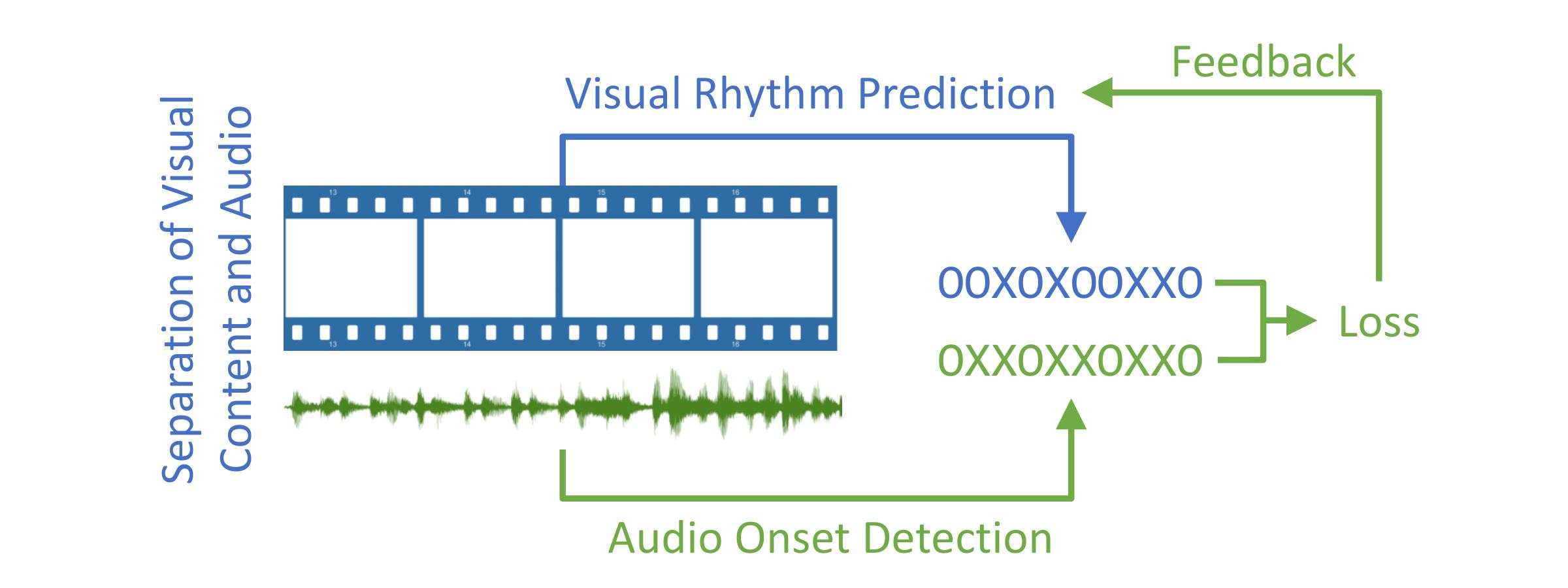

Yutong Xie, Haiyang Wang, Yan Hao, Zihao Xu

The paper proposes a data-driven visual rhythm prediction method, in which several visual features are extracted and then fed into an end-to-end neural network to predict the visual onsets.

MVA.